2025 and tech

This post serves as a bit of an overview of some of my biggest thoughts and projects with technology in the year 2025.

Table of Contents

- The Year I Finally Stopped Using ChatGPT

- Is Google Going to Win it All?

- AI Imagery and More Google Glazing

- From iOS to Android and Back Again

- Baby's First Homelab

- Music?

- Video?

The Year I Finally Stopped Using ChatGPT

While I've tried out just about every major release from the three major US labs and messed around with plenty of less-popular and/or open-source models, the model I've always come back to is ChatGPT.

Two big reasons why:

- The best model. Even if Claude or Gemini was updated with a model that beat the GPT of the month, it was never substantial enough to bother completely jumping ship. And there was always a strong chance that the next release from OpenAI on the horizon would wipe out the gains anyways.

- The built-in features. While there are plenty of ways to hack whatever you want via API with other models, they've been consistently outclassed by the native capabilities of ChatGPT. Not only did my subscription give me access to a best-in-class multimodal model, but I also got advanced voice mode, projects, and an excellent Mac app that can plug directly into applications like Terminal without needing to bother with copy-paste. OpenAI was always a few steps ahead in launching capabilities within ChatGPT, whereas other labs' equivalents either arrived late or existed outside of the primary UX.

And that's how things remained for almost the entirety of 2025. I tried other big releases on and off, even paying for Google AI Ultra at the introductory price for the first three months, but still had no reason to leave ChatGPT.

Then came Gemini 3.

Just under a month ago at the time of this writing, Google dropped a model that proved that they truly are the AI company. Anyone who knows anything about AI knows that Google has been the AI company since the beginning. While they've started many random projects and killed almost as many, AI has long been at the core of Google. In fact, if you truly don't know anything about AI beyond surface level (god bless you) you might not know that the paper which essentially the entirety of ChatGPT was built on, "Attention Is All You Need," was written by researchers at Google.

There was quite a bit of hype leading up to this release in the AI community, but I'd personally become so disillusioned with the hype coming out of AI circles that I didn't even want to bother trying new releases anymore. Even when I listened to the, in my opinion, least-emergency emergency episode of the Hard Fork podcast about the release, I wasn't pulling over in the nearest parking lot to ensure I got access as quickly as possible, as I'd literally done in the past.

But that all changed when I watched this video from my most trusted opinion-haver in the AI space, Philip from AI Explained, who was truly impressed by the model's capabilities. Not only is this the first model that feels more like a jump than a shuffle forward since GPT-3.5 to GPT-4, but it also represents a potential breakaway point for Google as an AI lab.

From the very first prompt I gave Gemini 3 I was already sold. I took a recent prompt I'd given ChatGPT asking if pursuing an accelerated IT bachelor's/master's degree would be worth my time. As expected, ChatGPT told me that it was a great idea! But only if I could get it done in a quick enough time frame. It also gave me a decent little roadmap for how to get it done.

When I gave this same prompt to Gemini 3, what shocked me first was that it didn't just base its answer purely on my prompt; it did research to get more context on the situation. In doing so, it mentioned that while the degree I'd asked about isn't a bad idea, a bachelor's in network engineering might be better suited to my goals, and it gave me a breakdown of why. When I pushed back a little, saying I wanted to get a master's and asking whether it would make sense to do the two degrees separately rather than through this accelerated program, Gemini gave me a detailed breakdown of why the network engineering degree is the better play and showed that the time I'd save in the accelerated program would not be worth getting significantly less of the coursework I'm actually interested in.

As I continued to test Gemini 3 throughout that week on various things I realized that this was the first time I was even bothering to compare answers with ChatGPT. I had no question in my mind that the answers I was getting were significantly better and more trustworthy than any I'd experienced in my years with ChatGPT.

It's now nearing the end of December and, for the first time since November 2022, I can say I've barely touched ChatGPT. The only exceptions: a brief period when I was still using the Mac app, a marshmallow-in-a-jar guessing competition I ran among models, and the moment I canceled my subscription.

As a final note on this section, while Gemini is probably around 85% of my AI workload, I actually started using Claude Opus 4.5 quite a bit too. Not only is it an extremely intelligent model, but I primarily use it rather than Gemini when I want a more conversational model, such as when asking for interpersonal or career advice, journaling, and philosophical pondering.

Is Google Going to Win it All?

Let's consider the three major US labs' advantages:

OpenAI has the advantage of being first: ChatGPT has basically become the Kleenex of chatbots. They also have Sam Altman, allegedly the most persuasive fundraiser and talent scout of his generation. Until very recently OpenAI also had the best model(s) available globally.

Anthropic has the advantage of maintaining a consistent research and safety track record that appeals to top AI researchers, many of whom intersect with EA and AI-safety circles. Anthropic has also reportedly locked down much of the enterprise AI market and will likely not run out of willing multi-billion-dollar investors anytime soon. Claude Code has cornered the vibe-coding and assisted-coding space, and Claude is widely considered to have the best personality and emotional intelligence.

Then there's Google. Google, you might say, has the everything advantage.

- Talent: Google has and attracts the best in the world.

- Hardware: Google designs their own chips. They do not rely on Nvidia for compute.

- Data Centers: Google has operated data centers for 20 years and is experienced in building the most advanced facilities in the world.

- Data: Google essentially invented the concept of mass user-data collection.

- Model: Google arguably has the most powerful model along with multiple adjacent frontier-AI projects (World, image/video, science, etc).

- Money: While OpenAI is begging for more subscriptions, considering ads in SlopTok, and conducting circular fundraising deals, Google has one of the best businesses of all time. In Q4 2022, the fiscal quarter in which ChatGPT was released, Google brought in $76.05 BILLION in revenue. What about more recently in Q3 2025? $102.3 billion. OpenAI is a private company so we don't know for sure, but they're estimated to have brought in maybe $13 billion in the entirety of 2025, though Sam Altman claims it will be closer to $20 billion.
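To put the money point in perspective, here's a rough back-of-the-envelope sketch in Python. It only uses the figures quoted above (the OpenAI numbers are estimates, not reported results), and the variable and function names are just made up for illustration.

```python
# Figures quoted in this post, in billions of US dollars.
google_q4_2022 = 76.05         # Google revenue, quarter ChatGPT launched
google_q3_2025 = 102.3         # Google revenue, most recent quarter cited
openai_2025_estimate = 13.0    # outside estimate for OpenAI's full-year 2025
openai_2025_altman = 20.0      # figure Sam Altman has claimed instead


def pct_growth(old, new):
    """Percentage change from old to new."""
    return (new - old) / old * 100


def ratio(a, b):
    """How many times larger a is than b."""
    return a / b


growth = pct_growth(google_q4_2022, google_q3_2025)
vs_estimate = ratio(google_q3_2025, openai_2025_estimate)
vs_altman = ratio(google_q3_2025, openai_2025_altman)

print(f"Google quarterly revenue growth: {growth:.1f}%")
print(f"One Google quarter vs OpenAI's estimated year: {vs_estimate:.1f}x")
print(f"One Google quarter vs Altman's claimed year: {vs_altman:.1f}x")
```

Even taking the more generous $20 billion figure, a single Google quarter comes out at roughly five times OpenAI's entire year.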

While the other guys are creating literally the biggest investment hype train of all time by a country mile to secure unsustainable investment numbers, Google is just chilling, already making more than enough money on the side to fund AI development.

Since they were caught with their pants around their ankles in 2022, Google has seemingly struggled to re-cement themselves as leaders in artificial intelligence. In those early days, when it was still unclear just how fast OpenAI would advance their own model, it seemed feasible that the giant might be felled. Now in 2025, for better or for worse, it appears the giant is fully awake and prepared to destroy the tiny Davids that once seemed such a threat.

My first realization of Google's coming dominance came from this blog post written in April of this year, well before I was impressed enough to switch to Gemini entirely. I think it's a safe bet that by the end of 2026 Google will be even further ahead than they are now.

AI Imagery and More Google Glazing

This brown cat is one of the first images I ever generated. It's hard to believe that 3 years ago I was giggling with excitement that I could just type words and an image would pop out! I mean it looks like shit but it's certainly a brown cat and there's sorta a butler thing happening.

In 2022 this stuff wasn't really all that controversial, it was just a fun little experimental toy! But in 2023 things changed with tools like Midjourney v6 which could create genuinely artistic-looking images:

Also in 2023 came DALL-E 3, the first model that didn't require you to be a prompt engineer to get specific output, and one that could occasionally render text that wasn't a garbled mess.

Now that anyone could write a simple prompt to generate an image often good enough to be mistaken for human-crafted art, the AI imagery ethical debate took off.

I'm generally cautiously optimistic about technology and while I understand the arguments against generated imagery, I feel that much of the debate is overblown. I didn't and don't see any reason that generated imagery would devalue human-made imagery, but that's a topic for a different post. Point is, by late 2023 generated imagery was good enough that people were concerned, but still bad enough that it was obvious to everyone but your grandma and not actually useful in 99% of cases.

That is, until March 2025, when OpenAI finally released 4o Image Generation (I can't believe that was this year).

There were four things that really separated this advancement from every other: prompt adherence, visual fidelity, text, and photo/character consistency.

The Classic: "make this cat look anime" - ChatGPT 4o in March 2025

Character/Photo persistence: "Make a trading card with this dog as the character in the drawing. it's name is enzo.add in other details to make it look like a more realistic card. holographic card" - ChatGPT 4o in March 2025

These four capabilities were in their larval stages with DALL-E 3, so this step up was almost shocking. For the first time since the release of the original ChatGPT I heard buzz about AI outside of tech circles.

I actually almost thought that this would be the first time we had an image model that could fundamentally change creative job outlooks because of how good it appeared at first. But, like many models, the more you used it the more the cracks began to appear. It was certainly much better than anything that had come before, but it still produced plenty of typos, hallucinated entirely different characters from the ones you provided, and had many more flaws that would create nearly as much work fixing things as if you'd just done it yourself from the beginning (assuming you have some degree of artistic ability).

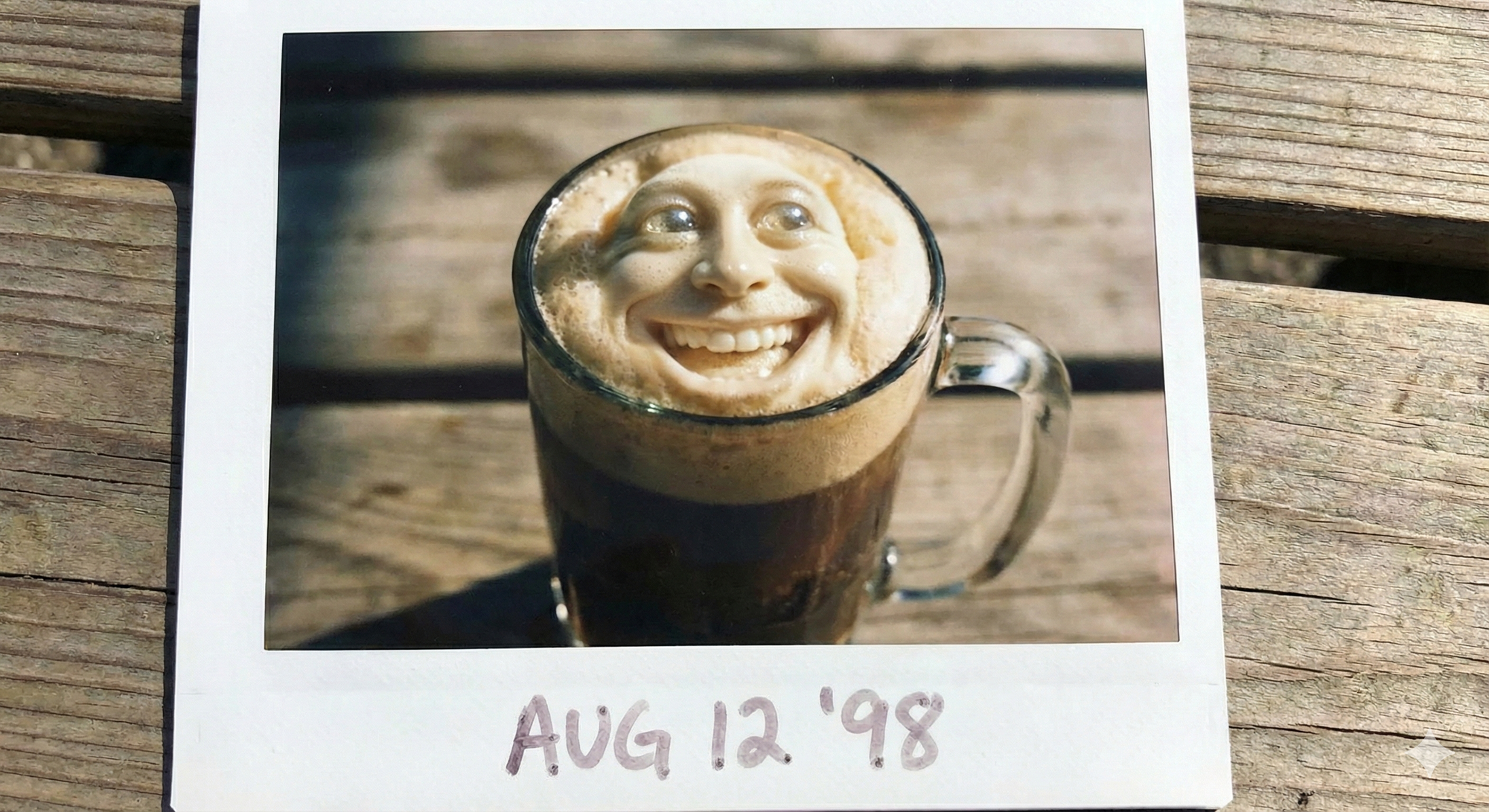

Google's answer to 4o was Nano Banana and while on the surface it had all the same capabilities it was just straight up not it. I could go into detail but I think this image comparison for the prompt "make me a photo-realistic Polaroid photo of a root beer float with a funny face on it" will be enough:

Can you guess which model is which?

So for most of 2025 we had much more capable image generation but with a distinct lack of anything I'd classify as genuinely useful. But, as has become the theme of late 2025, then Google came out with Nano Banana Pro.

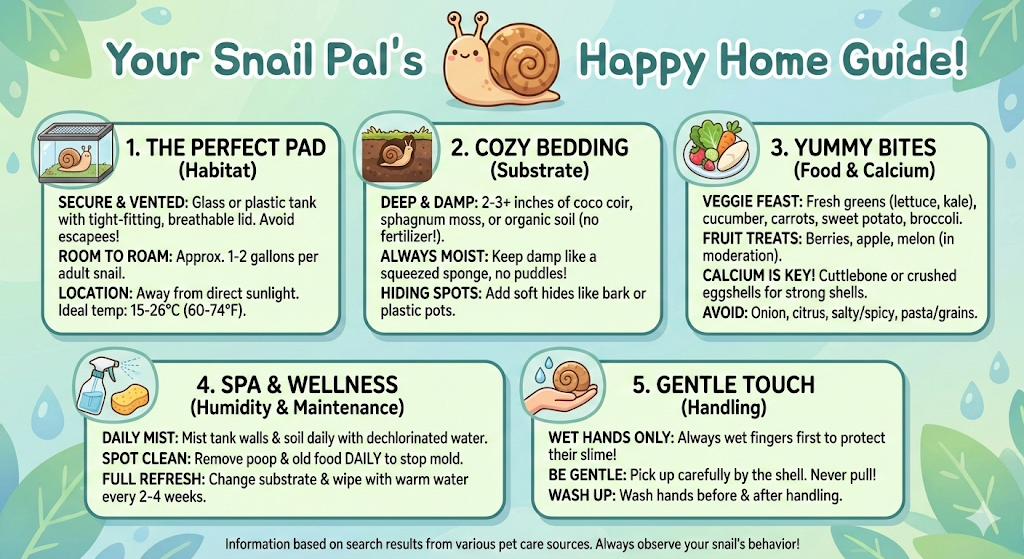

Nano Banana Pro is the first model I'm confident in calling actually useful. Let me just list a few things that make me say that: text with fewer typos than I make myself, infographics with actual information, tiny details in prompts accounted for, visual fidelity, photo editing and manipulation, and all this while being significantly faster than 4o. I'll let the images speak for themselves:

"Photo realistic polaroid of a root beer float with a funny face on it" -> "The root beer float itself should have a face as if it is alive" | I like that it made the funny face as if an ice cream shop employee squirted one on there but that edit... nightmare fuel

While I can't say the model is perfect, it has effectively solved 99% of the issues with previous image generators.

What does this mean moving forward? I have a few ideas for future blog posts on this topic but my biggest takeaway so far is how little has changed despite this capability.

We live in an era where a photo or video of literally anything happening can be generated by anyone with an internet connection. While there are stories here and there of AI imagery causing actual real-life issues, I think the most shocking part of all of this is how quickly society has adapted to this being possible. I think if you asked most people in 2021 what would happen if anyone could create a fake image or video nearly indiscernible from reality, most would have foretold complete collapse.

Society does indeed feel like it's in a precarious place, but not because I can generate a video of a political figure shitting on a constituent wearing a shirt of the opposing party. Time will tell if further advancements in fidelity and compliance will cause widespread destruction, but I'm betting on no.

From iOS to Android and Back Again

My last foray into Android before this year was with the Galaxy Note20 in 2020. Really cool phone which I kept for about a month before switching to an iPhone SE for some reason.

Since then I've stayed on the iPhone train and, starting with the iPhone 13 Pro, upgraded to the new iPhone every year.

But man did I start hating iPhone.

I generally quite enjoy the Apple ecosystem; it's where I started and it often still feels magical. Plus I very much align with Apple's privacy philosophies. But Apple has been on a slow downward trend, succumbing to software stability failures, over-promising, and releasing products/features that just... suck.

I finally had it with the iPhone 16 because the iOS keyboard is actually completely broken (and still is!!!) and the new Camera Control button basically just functioned as a way for me to accidentally open my camera, completely fuck up my camera settings while trying to take a quick photo, or accidentally open the under-powered Visual Intelligence feature (useless compared to every other visual intelligence option).

All while all these cool Android phones were coming out with features that weren't just gimmicks anymore and hardware with crazy specs that eroded Apple's traditional advantage of tight integration between hardware and software.