A Slightly More Intuitive Understanding of Why LLMs Aren't Intelligent or Conscious but Are Still Pretty Useful


I am a heavy AI user for just about everything that isn't this blog's prose or my interactions with my friends and family. I primarily use Claude, especially Claude Code, and Gemini has all but replaced Google Search for me (but not DuckDuckGo).

AI's advancement in my lifetime (1999 onward) is staggering, and even just the developments since GPT-3.5 in November 2022 are hard to comprehend. As someone who has followed every advancement closely since 2022, I think most people who've been on the sidelines would be shocked at what current systems, particularly agentic systems, are capable of at the time of this writing.

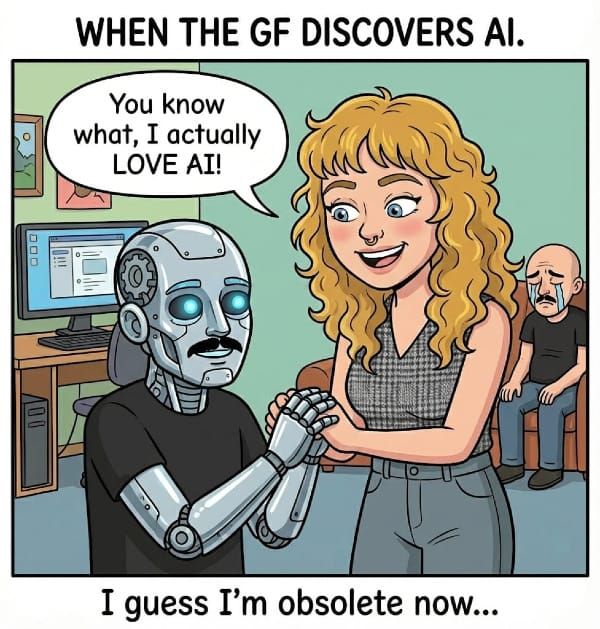

Most non-tech people I speak to are aware of AI and occasionally use ChatGPT for advice or for fun. Some of them use it to make images here and there, or, more likely, know that it can make images and hate it for that.

Most think of AI as a mistake machine that peters out after a few exchanges. Something that crazy people set loose on their computers so it can delete their important files. Something that has caused more outages than usual at AWS because nobody wants to bother reviewing mission-critical code anymore. Well, I guess that part is actually true.

Most of the people in my real life would think that the tech bros, CEOs, and heads of state who talk about AI taking jobs or changing the world are stupid and crazy, because AI can't do anything except make slop; for most of the people in my life, that's pretty much all they've seen. I can't blame them. The only reason I've seen more is that I was born a tech obsessive with a kink for futurism, and AI represented not only that but a way to amplify my strengths and shore up my weaknesses.

I'm writing this from a place of genuine awe at the systems I have access to right now, but also from a place of skepticism that what we have now will become much more than what it is.

That being said, even if it's not "much" more, there are still a lot of gains to be had between now and some future AGI, and I hope humanity can keep its collective shit together for long enough for those gains to come to fruition.

So, the whole point of this is to call out the AI researchers and capitalists who claim LLMs (including reasoning and agentic systems) are conscious or even intelligent. Well, really it's more to form a coherent way of thinking about the question at all, one that isn't extremely academic, technical, or boring to read.

If you find you already agree that LLMs aren't intelligent because you think AI is stupid and useless, I'd recommend you spend some time with Claude Opus 4.6 and/or Gemini 3.5 Pro, preferably with an agentic tool like Claude Code/Cowork or Google Antigravity. This isn't written for people who think AI is dumb and pointless; it's written for people who have a hard time understanding how something so capable doesn't have intelligence.

Power users of AI, myself included, feel a little pang of annoyance when we hear the normies describe AI as "just predicting the next word to say". But they're right, sort of.

In the absolute simplest of terms, LLMs are just predicting the next token. You can think of a token as a unit of information in a model: sometimes it's a whole word, and often it's a fragment of a word or a common chunk of characters. When you stack reasoning and tool use on top of a model, some tokens end up carrying information specifically about how to use a tool or how to reason.
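To make "just predicting the next token" a bit more concrete, here's a deliberately tiny sketch, assuming a toy bigram model (count which token follows which) and a naive whitespace tokenizer. It is nothing like how a real LLM works internally, and every function name here is hypothetical, but it shows the basic loop: predict one token, append it, and use it as context for the next prediction.

```python
from __future__ import annotations

from collections import Counter, defaultdict


def tokenize(text: str) -> list[str]:
    # Deliberately naive: real LLM tokenizers (e.g. byte-pair encoding) split
    # text into learned subword pieces, not just whitespace-separated words.
    return text.lower().split()


def train_bigram(tokens: list[str]) -> dict[str, Counter]:
    # For each token, count which tokens came right after it in the training text.
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(tokens, tokens[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(model: dict[str, Counter], token: str) -> str | None:
    # "Predicting the next token" in its simplest form: pick the most frequent
    # continuation seen in training (greedy decoding). Ties go to whichever
    # continuation was seen first.
    counts = model.get(token)
    if not counts:
        return None
    return counts.most_common(1)[0][0]


def generate(model: dict[str, Counter], start: str, max_tokens: int = 5) -> list[str]:
    # Autoregressive loop: each predicted token is appended to the output
    # and becomes the context for the next prediction.
    out = [start]
    for _ in range(max_tokens):
        nxt = predict_next(model, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return out


corpus = "the cat sat on the mat . the cat ate the fish ."
model = train_bigram(tokenize(corpus))
print(" ".join(generate(model, "the", max_tokens=4)))  # prints "the cat sat on the"
```

A real LLM swaps the count table for a huge neural network that scores every token in its vocabulary given the entire preceding context rather than just the last token, and it usually samples from those scores instead of always taking the top one. The loop of "predict, append, repeat" is the part that carries over.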

There are many ways this system has become much more complex, and many capabilities have seemingly "emerged" out of what can be thought of as a cloud of data connected in ways currently beyond our comprehension. Some of the best insights we've garnered so far come from Anthropic's Interpretability lab, whose research shows models